Gül Sena Altıntaş

Hello, I am Gül Sena (/ɡuːl se’na/)! I am a second-year Computer Science PhD student at UofT working with Colin Raffel. My current research interests lie at the intersection of multilinguality and modularity in language models. I am particularly interested in how we can move away from one-big-size-fits-all architectures toward systems that adapt efficiently across diverse languages and domains. These days I am thinking a lot about tokenization and input representations in language models, and how they relate to multilingual, cross-domain, and cross-modal abilities.

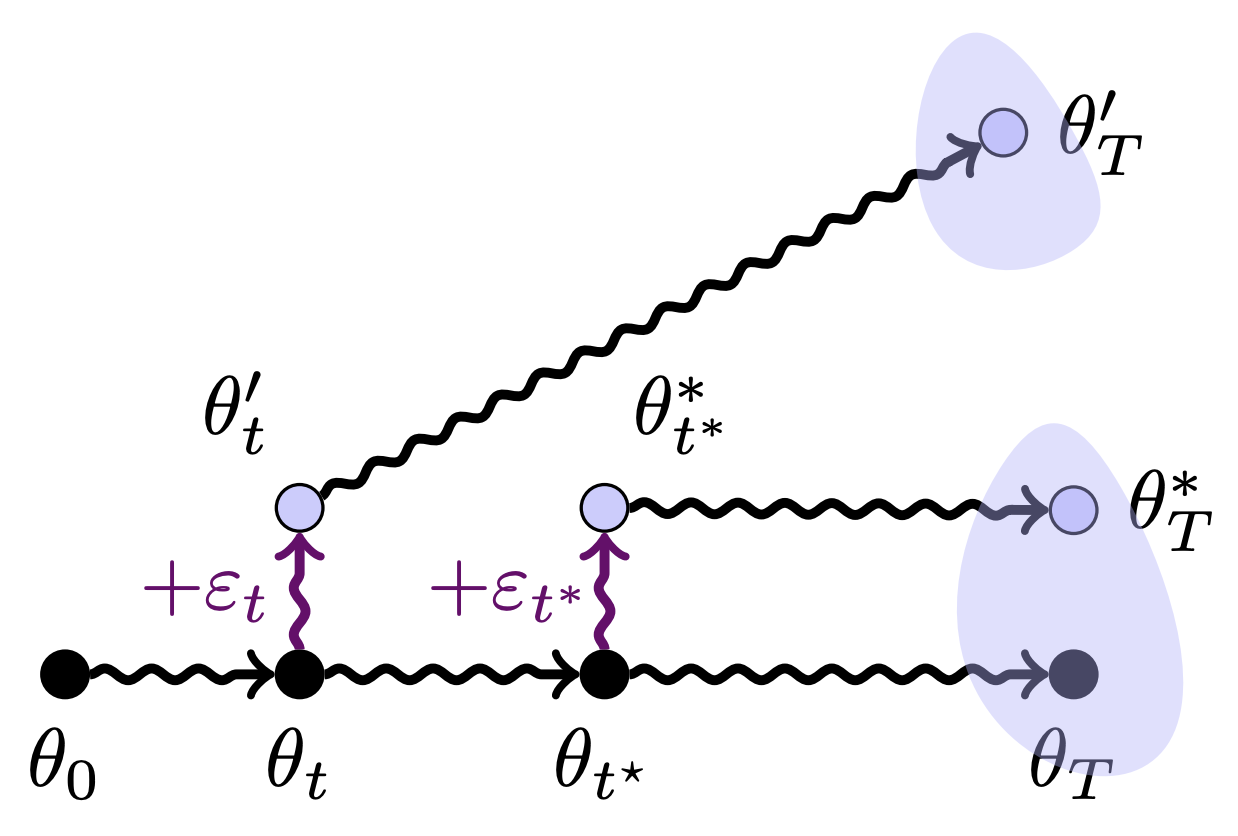

Even though I am no longer working in this area, I am also fascinated by the literature on linear mode connectivity and loss landscapes, especially their implications for learning theory, decentralization, and modularity.

Prior to my PhD, I completed my master's at ETH Zürich and my bachelor's at Koç University. I enjoy teaching. In my free time I like rowing, running, and cooking.

news

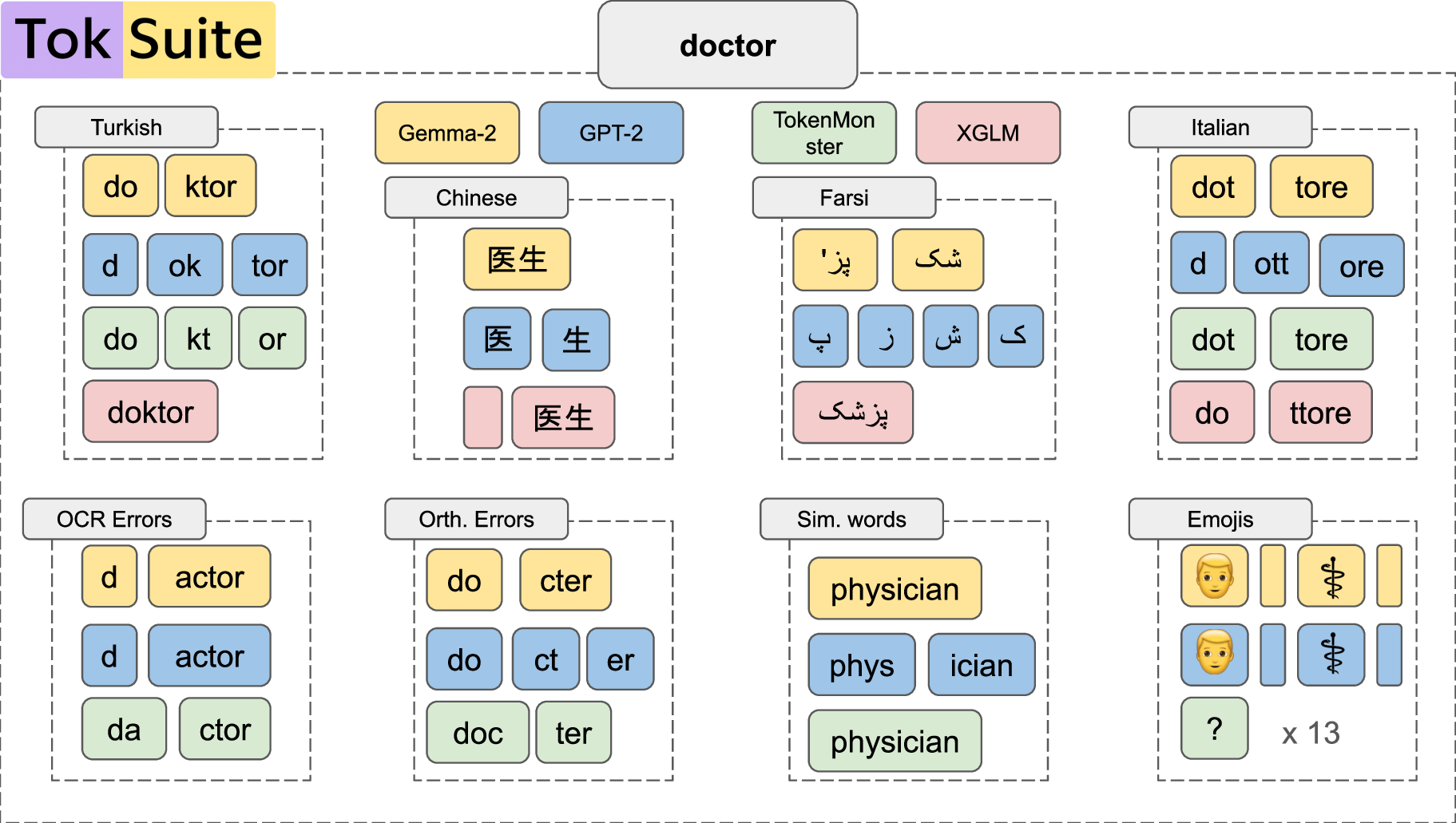

| Date | News |
|---|---|
| Dec 30, 2025 | Our preprint TokSuite: Measuring the Impact of Tokenizer Choice on Language Model Behavior is on arXiv. |
| Jun 02, 2025 | Our full paper on the Butterfly Effect in neural network training dynamics will appear at ICML 2025. |